Server Processors: Overview and Forecast to 2025

In an industry where performance and energy efficiency directly determine competitive advantage and operating costs, choosing a server processor is a strategic decision. By 2025, the server processor market will be characterized not just by technical rivalry, but by fundamentally different architectural design philosophies among key players. While classic x86 solutions from AMD and Intel continue to evolve, offering record-breaking multithreading and specialized acceleration, alternative ARM architectures are firmly establishing themselves in specific niches, offering unprecedented efficiency.

This material provides a comprehensive technical analysis of modern server CPUs, their platforms, and optimal application scenarios, necessary for forming a balanced investment and operational strategy for data centers and professional workstations.

Introduction

The modern server landscape has been radically transformed. While Intel Xeon’s dominance was once the virtually undisputed standard for data centers, AMD’s return to the high-performance market in 2017 ushered in a new era of competition. Today, AMD EPYC is not just catching up, but in many ways setting new benchmarks, particularly in multi-threaded workloads and compute density.

Meanwhile, a third force is gaining momentum: ARM-based processors, which have migrated from the mobile segment to server racks, promising a revolution in performance per watt. This review aims to provide a clear, marketing-free comparison of the key platforms of 2025: AMD EPYC, Intel Xeon, and promising ARM solutions, focusing on the practical benefits for decision makers in infrastructure procurement and deployment.

Where does the data come from?

The analysis is based on aggregating data from authoritative industry sources, including technical reviews, official vendor specifications, and independent benchmark results. Key sources included specialist IT publications and hosting providers (such as King Servers and Itelon), which provide practical implementation cases.

Data from resources specializing in hardware benchmarking (such as CPUBenchmark.net) and official press releases from manufacturers were also considered. All conclusions and recommendations are focused on the current market situation in 2024-2025 and aimed at maximizing value for end users when planning infrastructure projects.

Processors

Intel

Intel, long synonymous with the word “server processor,” continues to evolve its flagship Xeon lineup, focusing on a mature ecosystem, strong single-threaded performance, and built-in hardware acceleration for specific workloads.

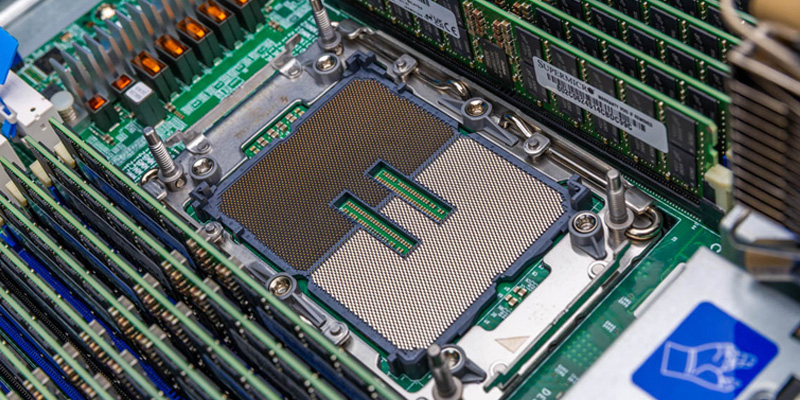

Intel Xeon Scalable Server

The current generations of Intel Xeon Scalable processors, represented by the Sapphire Rapids and Granite Rapids architectures, remain a cornerstone for many enterprise environments. Sapphire Rapids, manufactured on the Intel 7 process (a 10 nm-class node), offers up to 60 high-performance cores (P-cores) and 120 threads per socket. The key feature of these processors is not only raw compute but also a rich set of integrated engines: Intel Advanced Matrix Extensions (AMX) for AI inference and machine learning, Intel QuickAssist Technology (QAT) for accelerating data encryption and compression, and Intel Data Streaming Accelerator (DSA) for offloading data movement.

This makes Intel Xeon the preferred choice for tasks where optimization for specific, often legacy, enterprise applications such as SAP HANA or complex databases matters as much as multithreading. In multi-socket configurations (four to eight processors), the Xeon platform offers proven reliability and well-established inter-socket communication, which is crucial for vertical scaling of large monolithic systems.

Intel Xeon W

The Intel Xeon W series is targeted at the high-end professional workstation market, which requires maximum computing power within a single-socket platform. These processors, such as the W9-3595X, combine Xeon architectural advantages (ECC memory support, enterprise management features) with high clock rates.

They are designed for rendering, CAD design, scientific simulations, and data analysis, where both parallel processing and single-threaded responsiveness are crucial. However, in purely computational tasks such as rendering in V-Ray or KeyShot, AMD’s flagship solutions can demonstrate a performance advantage of over 100%, according to AMD’s own figures.

Intel Xeon E

The budget-friendly Intel Xeon E series (formerly known as the Xeon E3) occupies the entry-level server and small business market. These processors, often sharing a platform with desktop Core processors, offer a basic set of server features, such as ECC memory support and increased reliability, but at a significantly lower cost of ownership.

They are ideal for file servers, backup systems, low-density virtualization hosts, or office infrastructures that do not require extreme multithreading or advanced scalability.

Intel Core

Using Intel Core processors (especially the flagship i9 series) in server solutions is a niche but real practice, primarily for specialized tasks or very limited budgets. Such systems lack key enterprise features, most notably ECC memory support on typical desktop platforms, which puts data integrity at risk under sustained high loads.

Their use may be justified in test environments, for individual container deployments, or for tasks that depend on single-core performance. For production workloads in the data center, however, Xeon remains the clear choice in terms of reliability and fault tolerance.

AMD

AMD made a significant leap into the server market by introducing an architecture that emphasized maximum core count, high subsystem throughput, and energy efficiency. This chiplet-based design strategy allowed AMD to rapidly increase computing density.

AMD EPYC Scalable Server Processors

AMD’s flagship EPYC server platform, represented in 2025 by the 9004 (Genoa, Bergamo) and 9005 (Turin) series, is the main competitor to Intel Xeon. Its distinguishing feature is a very high number of cores and threads within a single socket. For example, the EPYC 9754, based on the Zen 4c architecture, offers 128 cores and 256 threads, while the top-end Turin series models offer up to 192 cores.

In a direct comparison, a dual-socket AMD EPYC configuration can deliver 30-40% more total computing power than a comparable Intel Xeon configuration. Besides cores, the platform’s advantages include 12 DDR5 memory channels per socket (versus 8 on the Sapphire Rapids generation of Xeon) and 128 PCIe 5.0 lanes. This makes EPYC well suited to highly parallel workloads such as virtualization, cloud environments, in-memory databases, high-performance computing (HPC), and big data processing.

AMD Ryzen Threadripper

For the professional workstation market, AMD offers the Ryzen Threadripper series, and its professional version, Threadripper PRO. These processors, like the flagship Threadripper PRO 9995WX, essentially bring the EPYC philosophy (massive core count, multi-channel memory, and numerous PCIe lanes) to the workstation form factor. With 64-96 cores, support for 8-channel DDR5 memory, and up to 128 PCIe 5.0 lanes, they are unrivaled in rendering, 3D modeling, compositing, and simulation tasks.

According to AMD, Threadripper PRO demonstrates a more than 100% advantage over competing Intel Xeon W solutions in rendering tests (Chaos V-Ray, Luxion KeyShot). This makes it the best choice for creative studios, engineering firms, and research centers.

AMD EPYC 4004

The AMD EPYC 4004 series represents the company’s strategy to capture the entry-level and mid-range single-socket server market. Based on the same Zen 4 architecture as their higher-end siblings but on a more affordable platform, these processors offer strong multi-threaded performance for their price point. They suit small and medium businesses, hosting providers, infrastructure deployments (file storage, domain controllers, web servers), and dense containerization, providing an excellent price-to-features ratio without the overhead of dual-socket platforms.

AMD Ryzen

Using AMD Ryzen desktop processors for server workloads, as with Intel Core, is a compromise. Select Ryzen 9 models with higher core counts may be attractive for home labs, testbeds, or low-power edge solutions. However, the lack of ECC memory support (in most models) and limited reliability and management capabilities make them unsuitable for any mission-critical workload in a commercial data center.

ARM manufacturers

The ARM architecture, long associated with mobile devices, has made a decisive breakthrough in the infrastructure processor market. Its philosophy, based on energy efficiency and scalability, resonates with major cloud providers and data center operators, for whom electricity represents a significant portion of operating expenses.

Ampere

Ampere Computing is one of the most active proponents of the ARM architecture in the general-purpose server processor segment. Its chips, such as the Ampere Altra and AmpereOne, offer 128 or more homogeneous high-performance cores and deliberately omit simultaneous multithreading (SMT/Hyper-Threading), so each core runs a single thread.

This ensures predictable performance and low power consumption. It is estimated that ARM solutions can deliver up to 40% energy savings compared to traditional x86 CPUs in similar cloud and web hosting workloads. Primary applications include scalable web services, container platforms like Kubernetes, caching layers, and microservice architectures, where density and efficiency are critical.
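To see what an energy saving of this size could mean in money, the estimate can be turned into a small calculation. The sketch below is illustrative only: the average power draw, electricity tariff, and PUE are hypothetical placeholders rather than measured values, and the 40% figure is used as the upper bound quoted above.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(avg_power_watts: float, price_per_kwh: float, pue: float = 1.0) -> float:
    """Yearly electricity cost of one server running at a constant average draw."""
    kwh_per_year = avg_power_watts / 1000 * HOURS_PER_YEAR * pue
    return kwh_per_year * price_per_kwh


# All inputs below are hypothetical placeholders, not measurements.
X86_AVG_WATTS = 500.0   # assumed average draw of an x86 server
ENERGY_SAVING = 0.40    # the "up to 40%" upper bound quoted in the text
PRICE_PER_KWH = 0.12    # assumed electricity tariff
PUE = 1.4               # assumed facility overhead (cooling, power delivery)

arm_avg_watts = X86_AVG_WATTS * (1 - ENERGY_SAVING)
x86_cost = annual_energy_cost(X86_AVG_WATTS, PRICE_PER_KWH, PUE)
arm_cost = annual_energy_cost(arm_avg_watts, PRICE_PER_KWH, PUE)

print(f"x86: {x86_cost:.2f} per server per year")
print(f"ARM: {arm_cost:.2f} per server per year")
print(f"Saving across 1,000 servers: {(x86_cost - arm_cost) * 1000:,.0f}")
```

Replacing the placeholders with measured power draw for the actual workload matters more than the formula itself, since the saving only materializes if the ARM system delivers comparable throughput.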

NVIDIA Grace

NVIDIA, a leader in the AI and HPC market, unveiled its Grace ARM processor, designed not as a one-size-fits-all solution, but as a highly optimized platform for artificial intelligence and supercomputing. Grace features fast NVLink-C2C communication between the CPU and GPU, which is critical for exascale computing. It is designed to integrate with NVIDIA Hopper and Blackwell accelerators, creating a unified, balanced computing platform for training and inference with the most complex neural network models.

Amazon Graviton

Amazon Web Services (AWS) takes a vertically integrated approach with its Graviton processor. Designed specifically for the AWS Cloud, Graviton (third and fourth generations) is optimized for cost and performance across a wide range of AWS services, such as EC2, RDS, ElastiCache, and others. For AWS customers, choosing Graviton instances often translates into direct cost savings of 20-40% with comparable or superior performance for cloud-native applications built for ARM.

RISC-V processors

The open and modular RISC-V architecture represents the most ambitious long-term challenge for both x86 and ARM. Its key advantage is the absence of licensing fees and the ability to deeply customize the chip for specific tasks. As of 2025, RISC-V is taking its first, but confident, steps into the server market. Companies like Ventana Micro Systems have already announced processors with 192 cores designed for data centers.

For now, the main barrier remains the maturity of the software ecosystem: operating systems, hypervisors, development tools, and business applications require porting and optimization. However, for specific, highly specialized workloads (networking devices, storage systems, accelerators), RISC-V can already offer unprecedented efficiency.

Video cards

In modern high-performance servers and workstations, the processor is only part of the computing equation. Graphics processing units (GPUs) and other specialized coprocessors handle tasks related to parallel data processing, artificial intelligence, scientific simulations, and professional visualization.

NVIDIA

Server GPUs

NVIDIA’s line of server GPUs, led by the Hopper (H100, H200) and Blackwell (B100, B200) architectures in 2025, is the de facto standard for AI and HPC. These accelerators feature specialized tensor cores for machine learning, high-bandwidth HBM3 or HBM3e memory, and NVLink interconnects for combining multiple cards into a single logical accelerator.

They are critical for training large language models (LLMs), recommender systems, quantum chemistry computations, and climate modeling. Compatibility with CPU platforms from all major vendors (x86 and ARM) makes them a versatile tool.

Desktop GPUs

The GeForce RTX lineup, including the RTX 50 series models, dominates the creative workstation market. Technologies like CUDA, OptiX ray tracing, and specialized AI cores (Tensor Cores) accelerate applications for rendering, 3D modeling, simulations, and video editing. Paired with powerful multi-threaded CPUs like AMD Threadripper, they deliver very high workstation performance for content creators.

AMD

AMD Instinct

The AMD Instinct series of accelerators (MI300X, MI325X) is a direct alternative to NVIDIA data center solutions. Based on the CDNA 3 architecture with a chiplet design (the related MI300A variant additionally integrates CPU cores in the same package), they offer strong performance for HPC and AI workloads. A key advantage is the open ROCm software ecosystem, which provides greater flexibility and control to developers. These cards are suitable for use in supercomputer clusters and large cloud infrastructures seeking vendor diversification.

AMD Radeon

In the professional workstation market, the AMD Radeon PRO line of graphics processors (W7000 and W8000 series) offers reliable performance for CAD, BIM, media content creation, and geoanalysis workloads. Their strengths include reliability, support for multi-monitor configurations, and optimization for specific professional applications.

Intel

After resuming its presence in the discrete graphics market, Intel is offering solutions for servers and workstations with the Intel Data Center GPU Flex and Max Series. These accelerators are targeted at cloud visualization (Cloud Gaming, VDI), media transcoding, and mid-range inference. Their integration with the Intel Xeon platform via technologies like oneAPI provides a unified software model, potentially simplifying development and deployment. Although their market share is still small, they represent an important third force, fueling increased competition.

A modern server or workstation is always a balanced system. The choice of CPU (whether AMD EPYC, Intel Xeon, or ARM) directly impacts the overall system requirements: the number and type of PCIe lanes required to connect the GPU; the memory bandwidth to feed these accelerators with data; and the overall platform architecture. The right combination of processor and accelerator determines the overall efficiency and cost of the solution for the target workload.

A balanced architecture for modern computing systems requires not only a powerful processor but also a deep understanding of how various components—from the chipset and memory controllers to interconnects and storage subsystems—interact with one another. This integration determines whether the system can realize the theoretical potential of its CPU and associated accelerators in real-world data center conditions.

Processor Comparison: AMD EPYC vs. Intel Xeon

The fundamental choice between the two dominant x86 platforms remains a central issue in the design of most infrastructures. A direct comparison of AMD EPYC and Intel Xeon reveals not only differences in technical specifications but also different approaches to solving modern computing problems.

Performance and multithreading

AMD EPYC 9754

The flagship AMD EPYC 9754 processor, based on the Zen 4c architecture, embodies AMD’s strategy for maximum core density. With 128 physical cores and 256 processing threads, it sets a new standard for parallel workloads.

In a dual-socket configuration, the system delivers 256 cores and 512 threads and is roughly 30% faster than comparable dual-socket Intel Xeon systems in multi-threaded benchmarks. This leap is made possible by the chiplet architecture, which enables cost-effective scaling of core count. This makes the EPYC 9754 and similar models well suited to high-density virtualization, where a single physical host can support thousands of containers or virtual machines; to scalable databases that distribute queries across multiple threads; and to HPC workloads that can be efficiently parallelized.

Intel Xeon Platinum (4-socket)

Intel’s answer to the challenge of multithreading is not only to increase core counts in a single socket, but also to support multi-socket configurations. The four-socket Intel Xeon Platinum platform provides a very large shared memory address space and aggregate cache, which is critical for very large in-memory database deployments like SAP HANA or complex ERP systems.

In this configuration, the system can combine the resources of 240 cores or more. However, performance does not scale linearly with additional sockets, because memory attached to a remote socket is slower to reach than local memory (non-uniform memory access, NUMA). Therefore, choosing a 4-socket Xeon platform is only justified for a limited number of applications specifically optimized for this architecture and requiring extreme amounts of shared memory, exceeding the capabilities of dual-socket systems.

Architecture and scalability

Memory bandwidth

One of the key architectural differences is the organization of the memory subsystem. The latest generation of AMD EPYC processors is equipped with 12 DDR5 memory channels per socket, while 4th- and 5th-generation Intel Xeon Scalable processors have 8 channels. At the same memory speed, this means that an EPYC-based server can deliver 50% higher theoretical peak memory throughput. For applications that intensively access data in RAM, whether databases, analytics platforms, in-memory caches (Redis, Memcached), or scientific simulations, this parameter directly impacts response time and overall system performance. More memory channels also help keep a large number of cores supplied with data, minimizing idle time.
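The 50% figure follows directly from the channel count. As a rough check, theoretical peak bandwidth is channels × transfer rate × bytes per transfer. The sketch below assumes both platforms run DDR5-4800 and counts only the 64 data bits (8 bytes) per channel, excluding ECC bits; sustained real-world bandwidth is lower.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int, bytes_per_transfer: int = 8) -> float:
    """Theoretical peak memory bandwidth per socket in GB/s (decimal gigabytes)."""
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


# Assumption: both platforms populated with DDR5-4800, one DIMM per channel.
DDR5_MT_S = 4800

twelve_channel = peak_bandwidth_gbs(12, DDR5_MT_S)
eight_channel = peak_bandwidth_gbs(8, DDR5_MT_S)

print(f"12 channels: {twelve_channel:.1f} GB/s")
print(f" 8 channels: {eight_channel:.1f} GB/s")
print(f"Advantage:   {(twelve_channel / eight_channel - 1) * 100:.0f}%")
```

The ratio depends only on the channel count as long as both sides use the same memory speed; a platform running faster DIMMs on fewer channels narrows the gap.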

Data Protection: Infinity Guard vs. SGX/TDX

Hardware-level security has become a requirement for multi-tenant cloud environments and virtualization. AMD implements its security suite under the Infinity Guard brand, a key element of which is Secure Encrypted Virtualization (SEV). SEV transparently encrypts the memory of each virtual machine using a unique key, isolating the data of one VM from another and even from the hypervisor. Intel offers a comparable technology called Trust Domain Extensions (TDX), which isolates entire virtual machines, alongside the older Software Guard Extensions (SGX), which protects application-level enclaves. All of these technologies are aimed at confidential computing, but their maturity, performance, and support in operating systems and hypervisors may vary, requiring additional verification when choosing a platform for a specific project.

PCIe lanes and CXL support

Expansion and peripheral connectivity options are determined by the number and generation of PCI Express lanes. AMD EPYC has traditionally led the way here, offering 128 PCIe 5.0 lanes per socket. 6th-generation Intel Xeon (Granite Rapids) has raised Intel’s own lane count to up to 96 PCIe 5.0 lanes, with support for the Compute Express Link (CXL) interface. CXL is a new, rapidly evolving technology that runs over the PCIe physical layer to create a single, coherent memory space between the CPU, memory, and accelerators.

Intel was an early adopter of CXL (version 1.1, then 2.0) in its platforms, which could provide an advantage in building future composable infrastructures. For modern workloads that require connecting multiple NVMe drives, high-speed network cards (100/200 GbE), or multiple GPUs, AMD’s abundance of PCIe 5.0 lanes provides greater scalability.
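Whether a planned build fits a platform’s lane count is simple arithmetic. The sketch below totals the lanes for a hypothetical configuration; the device mix and the typical link widths (x16 for GPUs and NICs, x4 for NVMe drives) are assumptions chosen for illustration, and real motherboards may reserve some lanes for other purposes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    lanes_each: int
    count: int


def lanes_required(devices: list[Device]) -> int:
    """Total PCIe lanes consumed if every device gets a full-width link."""
    return sum(d.lanes_each * d.count for d in devices)


# Hypothetical single-socket build; adjust to the real bill of materials.
build = [
    Device("GPU accelerator", lanes_each=16, count=4),
    Device("200 GbE network card", lanes_each=16, count=2),
    Device("NVMe SSD", lanes_each=4, count=8),
]

needed = lanes_required(build)

# Per-socket lane counts quoted in the text above.
for platform, available in {"128-lane socket": 128, "96-lane socket": 96}.items():
    verdict = "fits" if needed <= available else f"short by {needed - available} lanes"
    print(f"{platform}: need {needed}, have {available} -> {verdict}")
```

When a build does not fit, the usual options are narrower links for some devices, PCIe switches, or a second socket, each with its own bandwidth or cost trade-off.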

The key takeaway from the platform comparison is that AMD EPYC is often the best choice for workloads that prioritize maximum compute density, high memory bandwidth, and efficient scaling within a single node. Intel Xeon, on the other hand, maintains a strong position in environments that require maximum single-threaded frequency, rely on integrated hardware accelerators (QAT, AMX), or require vertical scaling in multi-socket configurations for legacy enterprise applications. The right choice is impossible without a clear understanding of the target workload characteristics.

Server platforms

A platform is an ecosystem that defines the capabilities and limitations of a server system beyond the processor itself. It includes the chipset, socket type, support for various memory types, and I/O interfaces. In 2025, the dominant x86 platforms are AMD’s Socket SP5 and Intel’s LGA 4677 (Socket E). The AMD SP5 platform, introduced with the EPYC 9004 series, is designed to support multiple processor generations, giving buyers confidence in future upgrades without replacing the motherboard.

It provides support for all key modern technologies: 12 DDR5 channels, 128 PCIe 5.0 lanes, and the Infinity Fabric interconnect for inter-socket communication in dual-processor configurations. Intel’s platforms for 4th- and 6th-generation Xeon Scalable processors have also transitioned to DDR5 and PCIe 5.0, but with a focus on accelerator integration and early support for the CXL standard for composable systems. Choosing a platform is a long-term decision that determines the infrastructure development roadmap for the next 3-5 years.

Memory

The evolution of the memory subsystem is one of the main drivers of performance growth in modern systems.

DDR5

By 2025, the DDR5 standard has become ubiquitous for new server deployments. Compared to DDR4, it offers not only higher data rates (4800 MT/s and above) but also a fundamentally different architecture: each server module has two independent 40-bit channels (32 data bits + 8 ECC bits), which improves effective bandwidth. On-die error correction is built into the standard, and server modules add the extra ECC bits per channel for end-to-end protection, which is critical for data integrity in the data center. Upgrading to DDR5 is mandatory for both AMD and Intel platforms, increasing initial costs but laying the foundation for improved performance.

DDR6

Although the DDR6 standard is actively being developed, its widespread adoption in server systems is not expected within the 2025-2026 planning window. It is expected to deliver another significant leap in bandwidth and energy efficiency. Those planning major infrastructure investments should consider this technology cycle when assessing deployment timelines and equipment lifecycles.

CXL

Compute Express Link (CXL) isn’t a new memory type, but a revolutionary protocol built on the PCIe 5.0/6.0 physical layer. It allows the processor to view memory installed on other devices (other CPUs, accelerators, specialized modules) as part of its unified address space with low latency.

This paves the way for the creation of “shared memory pools” in data centers, which can be dynamically allocated to different servers and workloads, dramatically increasing resource utilization. In 2025, CXL 1.1 and 2.0 are being actively deployed. Intel has been a prominent driver of the standard, and current AMD EPYC platforms support CXL as well.

Disks

Data access speed on storage devices often becomes a bottleneck. The NVMe over PCIe 5.0 interface, supported by modern server platforms, doubles the per-lane throughput compared to PCIe 4.0, allowing a single high-performance SSD to exceed 10 GB/s and arrays of such drives to reach tens of gigabytes per second.

For maximum performance and fault tolerance, RAID configurations using NVMe drives are used, which requires the processor and platform to have a sufficient number of high-speed PCIe lanes. The evolution of data storage standards is directly linked to the evolution of server processors and their I/O capabilities.
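The doubling from PCIe 4.0 to 5.0 can be checked from the per-lane signaling rates. The sketch below computes raw link bandwidth for a four-lane NVMe link using the published rates (16 GT/s and 32 GT/s) and the 128b/130b line encoding; it ignores packet and protocol overhead, so actual drive throughput is somewhat lower. The eight-drive array is a hypothetical example.

```python
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding used since PCIe 3.0
TRANSFER_RATE_GT_S = {"PCIe 4.0": 16.0, "PCIe 5.0": 32.0}


def link_bandwidth_gbs(generation: str, lanes: int) -> float:
    """Raw one-direction link bandwidth in GB/s, before protocol overhead."""
    gbit_per_lane = TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY
    return gbit_per_lane / 8 * lanes


gen4_x4 = link_bandwidth_gbs("PCIe 4.0", lanes=4)
gen5_x4 = link_bandwidth_gbs("PCIe 5.0", lanes=4)

print(f"PCIe 4.0 x4: {gen4_x4:.2f} GB/s")
print(f"PCIe 5.0 x4: {gen5_x4:.2f} GB/s")

# Hypothetical array of eight drives, each on its own x4 link.
drives = 8
print(f"{drives} drives on PCIe 5.0: up to {gen5_x4 * drives:.0f} GB/s over {drives * 4} lanes")
```

The lane total in the last line is the figure that has to fit within the platform’s PCIe budget alongside GPUs and network cards.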

Practical use cases

Theoretical comparisons only make sense when applied to specific business tasks. Below is an analysis of the optimal processor selection for typical scenarios.

Highly loaded web servers

For web services, API backends, and content delivery networks (CDNs), the ability to handle tens of thousands of concurrent connections and low latency are key. Processors with a large number of cores and threads are advantageous here, as each connection or request can be efficiently handled by a separate thread.

AMD EPYC, with its high core density and wide memory bus, enables processing more queries per second on a single server. ARM processors like Ampere Altra are also ideal for this task due to their energy efficiency and predictable per-core performance, which can lead to significant economies of scale for a large data center.

Virtualization and containerization

Creating dense virtual environments is where AMD EPYC excels. The number of virtual machines or containers that can be hosted on a single physical host grows with the number of available CPU threads and the memory capacity. A 96- or 128-core EPYC can host at least 50% more virtual machines than a 60-core Xeon on a comparable server, directly reducing hardware costs, hypervisor licensing (if licensed per socket, not per core), and rack space. Hardware-based VM encryption technologies (SEV/TDX) add an additional layer of security for multi-tenant environments.
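The relationship between thread count, memory, and VM density can be sketched as a capacity estimate in which a host is limited by whichever resource runs out first. The VM size, host memory, and overcommit ratio below are hypothetical; only the core counts come from the comparison above, and two threads per core are assumed for both CPUs.

```python
def vm_capacity(cores: int, threads_per_core: int, vcpus_per_vm: int,
                host_mem_gib: int, vm_mem_gib: int, cpu_overcommit: float = 1.0) -> int:
    """VMs per host, bounded by the scarcer of CPU threads and memory."""
    by_cpu = int(cores * threads_per_core * cpu_overcommit // vcpus_per_vm)
    by_memory = host_mem_gib // vm_mem_gib
    return min(by_cpu, by_memory)


# Hypothetical VM shape and host memory; no CPU overcommit.
VM = dict(vcpus_per_vm=4, host_mem_gib=1024, vm_mem_gib=8)

baseline = vm_capacity(cores=60, threads_per_core=2, **VM)
for cores in (96, 128):
    capacity = vm_capacity(cores=cores, threads_per_core=2, **VM)
    gain = (capacity / baseline - 1) * 100
    print(f"{cores} cores: {capacity} VMs ({gain:.0f}% more than {baseline} on 60 cores)")
```

With less host memory or larger VMs the memory bound takes over and the extra cores stop adding capacity, which is why core count and memory capacity should be sized together.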

Databases and analytics

The right processor for a database management system (DBMS) depends on the DBMS type. Transactional OLTP systems (e.g., PostgreSQL, MySQL) scale well across many parallel connections, so they again favor many-core AMD EPYC processors with a large number of memory channels for fast access to indexes and the buffer pool. For large analytical (OLAP) data warehouses and in-memory DBMSs (e.g., SAP HANA), overall memory bandwidth and capacity are critical. Here, both high-channel EPYC and multi-socket Xeon configurations can be used to achieve maximum unified memory capacity.

Network services and encryption

For tasks such as software-defined networks (SDN), virtual routers (vRouter), firewalls, and VPN gateways, the availability of specialized hardware accelerators matters as much as CPU performance. Intel Xeon with integrated Intel QuickAssist Technology (QAT) offers significant acceleration of encryption/decryption and data compression operations, offloading the CPU cores and increasing overall system throughput. For these applications, Xeon may be preferable, even if it has fewer cores.

Conclusion

By 2025, the server processor market has reached a new level of maturity and diversity, where there is no longer a single “best” solution for everyone. AMD EPYC has established itself as a leader in multi-threading, memory bandwidth, and power efficiency, offering maximum compute density for modern cloud and virtualized environments.

Intel Xeon maintains a strong position thanks to a mature ecosystem, high single-threaded performance, and a unique set of integrated accelerators for AI, encryption, and networking. Meanwhile, the ARM architecture, represented by players like Ampere, NVIDIA, and AWS, is emerging as a powerful alternative for energy-efficient, scalable cloud and web workloads, promising significant total cost of ownership savings.

The key trend for the near future is further specialization. Processor selection will increasingly be determined not by abstract benchmark scores, but by a fine-tuned match to specific workload characteristics: memory access patterns, degree of parallelism, latency and security requirements, and overall deployment cost (TCO).

The introduction of technologies like CXL and the closer integration of CPUs with GPUs and other accelerators will blur the boundaries between individual components, transforming the server into a single, highly optimized computing platform. It is now more critical than ever for IT architects and decision makers to conduct a thorough analysis of workloads and build a flexible, adaptable infrastructure that can effectively leverage the strengths of each available architecture.

Frequently Asked Questions (FAQ)

- Question: What is more important when choosing a server processor: the number of cores or the clock speed?

- Answer: Priority depends on the task. For parallelizable workloads (virtualization, rendering, web servers), the number of cores and threads is critical. For legacy or poorly parallelizable applications (some monolithic ERP systems, old databases), a high single-core frequency is more important. In most modern server scenarios, the former prevails.

- Question: Is it true that ARM processors are much more energy efficient than x86, and should you consider them for your data center?

- Answer: For suitable workloads (web services, containers, caches), yes: ARM processors can deliver energy savings of up to 40% compared with x86. However, adopting them requires checking the compatibility of the entire software stack, as not all applications have native ARM versions. They are an excellent choice for horizontally scalable, cloud-native environments, but can be challenging for environments with legacy software.

- Question: How does the choice of processor affect the cost of software licensing (e.g. VMware, Microsoft SQL Server)?

- Answer: Critically. Many enterprise software products are licensed by the number of physical or virtual cores. Therefore, installing a server with 128 AMD EPYC cores can result in much higher licensing costs than a server with a 64-core Intel Xeon, even if the former is more powerful. Before purchasing hardware, be sure to analyze the licensing model of the target software.

- Question: Is it possible to use desktop processors (Intel Core / AMD Ryzen) in servers?

- Answer: Technically, yes, for testbeds, home labs, or non-critical tasks. This is highly discouraged for production environments. Server CPUs (Xeon, EPYC) have essential features: ECC memory support for error correction, a large number of PCIe lanes for expansion, advanced reliability features (RAS), and a long support cycle. Their absence in desktop chips compromises stability and data integrity.

- Question: What is CXL and why is it important for the future?

- Answer: Compute Express Link (CXL) is an open high-speed communication standard that enables CPUs, memory, and accelerators to efficiently share resources with low latency. In the future, CXL will enable the creation of “composable” servers, where memory and acceleration resources are dynamically allocated from shared pools, dramatically increasing the flexibility and utilization of data center infrastructure. This is one of the key emerging technologies.
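The cores-versus-clock trade-off from the first answer can be made concrete with Amdahl’s law, which limits the speedup from extra cores by the fraction of work that cannot run in parallel. The sketch below is a simplified model, not a benchmark: the two CPU profiles are hypothetical, and it assumes performance scales linearly with clock speed, which real processors only approximate.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: speedup over one core for a given parallelizable fraction."""
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / cores)


def relative_throughput(parallel_fraction: float, cores: int, clock_ghz: float) -> float:
    """Toy model: clock speed times Amdahl speedup (assumes linear clock scaling)."""
    return clock_ghz * amdahl_speedup(parallel_fraction, cores)


# Hypothetical CPU profiles, chosen only to illustrate the trade-off.
many_cores = dict(cores=128, clock_ghz=2.25)
fast_cores = dict(cores=32, clock_ghz=3.6)

for p in (0.50, 0.90, 0.99):
    dense = relative_throughput(p, **many_cores)
    fast = relative_throughput(p, **fast_cores)
    winner = "many cores" if dense > fast else "fast cores"
    print(f"parallel fraction {p:.2f}: many={dense:6.1f}  fast={fast:6.1f}  -> {winner}")
```

In this model the many-core part only pulls ahead once nearly all of the work is parallelizable, which matches the answer above: poorly parallelized legacy applications favor clock speed, while virtualization and web serving favor core count.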