By the end of 2025, the industry had passed an inflection point: for the first time, CAPEX for running models (inference) exceeded the cost of training them. However, bringing dense 400B+ models (e.g., Llama 3.1 405B) into commercial use ran into hard physical limits: the 80 GB of memory that was standard in the H100 era became a bottleneck that broke the economics of such projects.

Below is an analysis of why memory has become more important than FLOPS, along with an honest look at the B200 and MI325X specifications, minus the marketing embellishments.

Anatomy of a Bottleneck (Memory Wall)

In LLM inference, we optimize for two metrics: Time To First Token (TTFT) and Inter-Token Latency (ITL).

The architect’s main rule: hot data (weights + KV cache) must live in HBM.

If the model doesn’t fit in VRAM and CPU offloading kicks in, performance collapses:

- Bandwidth gap: We drop from the HBM3e bus (~8 TB/s) to the DDR5 bus (~400–800 GB/s per socket), a 10–20x difference.

- Generation collapse: Throughput falls from 100+ tokens/sec to 2–3 tokens/sec. That is unacceptable for interactive chatbots, copilots, or real-time RAG systems.
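A back-of-the-envelope model makes the gap concrete. When decoding is memory-bandwidth-bound, each generated token requires streaming every weight from memory once, so bandwidth divided by model size gives a ceiling on single-stream tokens/sec. The sketch below assumes batch size 1, ignores KV cache reads, and uses a hypothetical 70B model stored in FP8 (1 byte per parameter); real systems land below these ceilings.

```python
# Ceiling on single-stream decode speed when generation is memory-bandwidth-bound:
# each new token streams every weight from memory once (batch 1, KV reads ignored).

GB = 1e9
TB = 1e12


def max_decode_tokens_per_sec(weight_bytes: float, bandwidth_bytes_per_sec: float) -> float:
    """Upper bound on tokens/sec if each token reads every weight exactly once."""
    return bandwidth_bytes_per_sec / weight_bytes


# Hypothetical example: a 70B-parameter model stored in FP8 (1 byte per parameter).
weights = 70e9 * 1

hbm = max_decode_tokens_per_sec(weights, 8 * TB)     # HBM3e, ~8 TB/s
ddr5 = max_decode_tokens_per_sec(weights, 400 * GB)  # DDR5, low end per socket

print(f"HBM3e ceiling: {hbm:.0f} tok/s")    # 114
print(f"DDR5 ceiling:  {ddr5:.1f} tok/s")   # 5.7
print(f"Slowdown:      {hbm / ddr5:.0f}x")  # 20x
```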

KV Cache: The Hidden Memory Eater

Many people forget that the model weights (for example, ~810 GB for 405B in FP16) are only half the problem. The other half is the context window, or more precisely the KV cache it produces.

With a 128k-token context and a batch size of 64, the KV cache can occupy hundreds of gigabytes; for the largest models at full context, it reaches terabytes.
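The KV cache stores one key and one value vector per layer, per KV head, per token, so its size is easy to estimate. The sketch below plugs in the layer and head counts from the published Llama 3.1 405B configuration (126 layers, 8 KV heads with grouped-query attention, head dimension 128); treat these as assumptions to check against the model card. It computes a worst case in which every sequence in the batch fills the full 128k window.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_elem: int) -> int:
    """KV cache size: one K and one V vector per layer, per KV head, per token."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem


# Assumed Llama 3.1 405B shape (grouped-query attention).
LLAMA_405B = dict(layers=126, kv_heads=8, head_dim=128)
CONTEXT = 128 * 1024  # 128k tokens

per_seq_fp16 = kv_cache_bytes(**LLAMA_405B, seq_len=CONTEXT, batch=1, bytes_per_elem=2)
batch_fp16 = kv_cache_bytes(**LLAMA_405B, seq_len=CONTEXT, batch=64, bytes_per_elem=2)
batch_fp8 = kv_cache_bytes(**LLAMA_405B, seq_len=CONTEXT, batch=64, bytes_per_elem=1)

print(f"one 128k sequence, FP16: {per_seq_fp16 / 1e9:.1f} GB")  # 67.6 GB
print(f"batch of 64, FP16:       {batch_fp16 / 1e12:.2f} TB")   # 4.33 TB
print(f"batch of 64, FP8:        {batch_fp8 / 1e12:.2f} TB")    # 2.16 TB
```

FP8 halves the figure but does not change its order of magnitude, which is why the cache ends up competing with the weights for HBM.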

- Reality: Even with aggressive KV cache quantization (FP8), on long contexts the cache starts competing with the model weights for HBM.

- Consequence: It’s not enough to simply “fit the model”; you need 30–40% memory headroom reserved specifically for the dynamic KV cache of user sessions.
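The headroom rule can be checked with simple arithmetic: subtract the weights from HBM, see what share of memory is left, and divide that leftover by the KV footprint of one session. The sketch below compares the three capacities discussed in this article (80 GB, ~180 GB, 256 GB) for a 70B model in FP16 (~140 GB of weights); the 10 GB-per-session KV figure is a hypothetical placeholder, not a measured value.

```python
from typing import Dict, Tuple


def session_capacity(total_hbm_gb: float, weights_gb: float,
                     kv_gb_per_session: float,
                     min_headroom: float = 0.30) -> Tuple[float, int, bool]:
    """Return (headroom share, max concurrent sessions, meets headroom target)."""
    free_gb = total_hbm_gb - weights_gb
    if free_gb <= 0:
        # The weights alone do not fit: offloading territory.
        return 0.0, 0, False
    headroom = free_gb / total_hbm_gb
    return headroom, int(free_gb // kv_gb_per_session), headroom >= min_headroom


WEIGHTS_GB = 140.0        # ~70B parameters in FP16
KV_GB_PER_SESSION = 10.0  # hypothetical placeholder; compute it for your model

results: Dict[int, Tuple[float, int, bool]] = {
    hbm: session_capacity(hbm, WEIGHTS_GB, KV_GB_PER_SESSION) for hbm in (80, 180, 256)
}
for hbm, (headroom, sessions, ok) in results.items():
    print(f"{hbm:>3} GB: headroom {headroom:.0%}, sessions {sessions}, meets 30% target: {ok}")
```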

Spec Battle: B200 vs. MI325X (Fact Check)

The market responded by releasing chips with increased memory density. However, it’s important to distinguish actual datasheets from presentation slides.

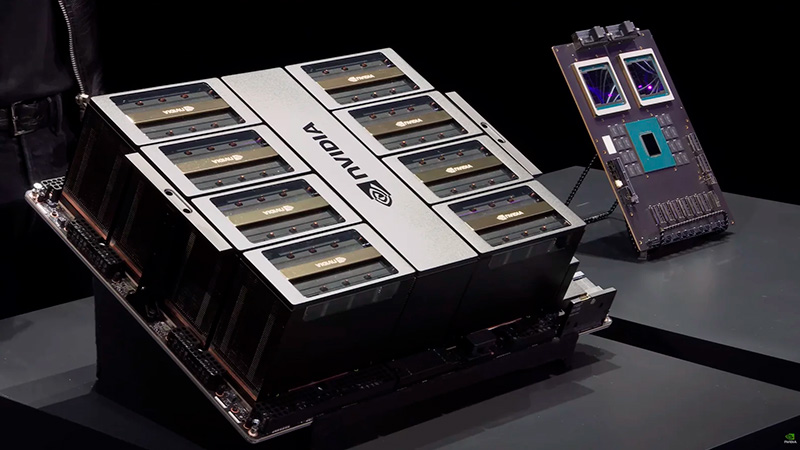

1. Nvidia B200 (Blackwell)

Marketing claims: 192 GB HBM3e.

Engineering reality: The chip does physically carry 192 GB (eight 24 GB stacks). However, in shipping systems (HGX/DGX), the user typically has access to about 180 GB. The remaining 12 GB are reserved for ECC and yield improvements.

- Verdict: This is a big step up from the H100’s 80 GB, but 400B+ models on a single node (8 GPUs) still require careful sharding.

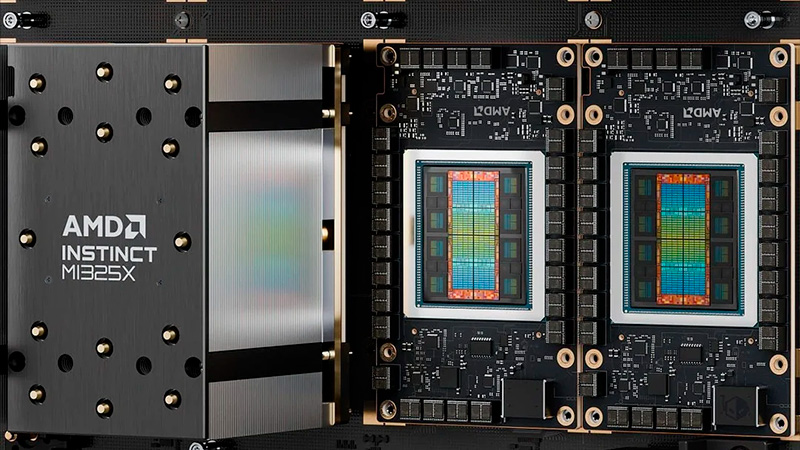

2. AMD Instinct MI325X

The erroneous figure of 288 GB often appears online.

Engineering reality: The MI325X (AMD’s flagship until late 2025) ships with 256 GB of HBM3e memory. The 288 GB figure belongs to the next generation (the MI350 series on the CDNA 4 architecture).

- Verdict: Even at 256 GB, AMD wins on memory per GPU. That is enough to run Llama 3.1 70B entirely on a single card with ample headroom for context, or 405B (FP16) on four cards instead of eight.
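The GPU-count claims follow from dividing weight size by usable memory per card. In the sketch below, a hypothetical helper rounds the result up to a power of two, assuming the tensor-parallel degree must divide the model’s head counts evenly (as it typically does for Llama-style models), and reports None if the model does not fit in one 8-GPU node. Weight sizes assume 405B parameters at 2 bytes (FP16) or 1 byte (FP8); the KV cache is ignored unless reserve_fraction is set.

```python
import math
from typing import Optional


def min_tp_degree(weights_gb: float, usable_gb_per_gpu: float,
                  reserve_fraction: float = 0.0, max_gpus: int = 8) -> Optional[int]:
    """Smallest power-of-two GPU count whose memory holds the weights.

    reserve_fraction is the share of each card kept free for the KV cache.
    Returns None if the model does not fit within max_gpus (one node).
    """
    budget_per_gpu = usable_gb_per_gpu * (1.0 - reserve_fraction)
    needed = math.ceil(weights_gb / budget_per_gpu)
    degree = 1
    while degree < needed:
        degree *= 2  # assumption: TP degree must be a power of two
    return degree if degree <= max_gpus else None


WEIGHTS_405B = {"FP16": 810.0, "FP8": 405.0}  # GB of weights
USABLE_GB = {"B200": 180.0, "MI325X": 256.0}  # usable HBM per card

results = {
    (fmt, gpu): min_tp_degree(w, cap)
    for fmt, w in WEIGHTS_405B.items()
    for gpu, cap in USABLE_GB.items()
}
for (fmt, gpu), n in results.items():
    print(f"405B {fmt} on {gpu}: {n} GPUs")
```

With these inputs, FP16 weights need 8 B200s versus 4 MI325X cards, and FP8 weights need 4 versus 2. An 80 GB card returns None for FP16 405B, since it would need more than one node. Passing reserve_fraction=0.3 (the 30% end of the headroom rule) raises the FP16 case to eight GPUs on both cards.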

| Characteristic | Nvidia B200 | AMD Instinct MI325X |
|---|---|---|
| Memory (usable) | ~180 GB HBM3e | 256 GB HBM3e |
| Memory bandwidth | ~8 TB/s | ~6 TB/s |
| Best fit | Maximum performance (FP4/FP8), proprietary CUDA stack | Maximum capacity (RAG, long context), open ROCm stack |

2026 Trends: Fighting the Inter-GPU Link

Why are we fighting so hard for memory capacity on a single chip?

To run a GPT-4/5-class model, we use tensor parallelism, “slicing” each layer’s weight matrices across 8 or 16 GPUs.
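Tensor parallelism does not hand whole layers to different GPUs; it splits the weight matrices inside each layer. The pure-Python sketch below simulates the Megatron-LM-style split of an MLP block: the first weight matrix is split by columns (each simulated GPU computes its own slice of the hidden activation without talking to the others), the second by rows, and the partial outputs are summed. That final sum is the all-reduce that crosses NVLink on real hardware. ReLU stands in for the activation, the matrices are arbitrary toy values, and the shards are plain list entries rather than devices.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*b)] for row in a]


def relu(m: Matrix) -> Matrix:
    return [[max(0.0, v) for v in row] for row in m]


def split_cols(m: Matrix, n: int) -> List[Matrix]:
    """Split m into n equal column blocks, one per simulated GPU."""
    k = len(m[0]) // n
    return [[row[i * k:(i + 1) * k] for row in m] for i in range(n)]


def split_rows(m: Matrix, n: int) -> List[Matrix]:
    """Split m into n equal row blocks, one per simulated GPU."""
    k = len(m) // n
    return [m[i * k:(i + 1) * k] for i in range(n)]


def tensor_parallel_mlp(x: Matrix, w1: Matrix, w2: Matrix, n: int) -> Matrix:
    # Column-parallel first layer: each shard owns a slice of W1's columns, so it
    # computes its own slice of the hidden activation with no communication.
    hidden = [relu(matmul(x, w1_i)) for w1_i in split_cols(w1, n)]
    # Row-parallel second layer: shard i multiplies its hidden slice by the
    # matching rows of W2, yielding a partial sum of the full output.
    partials = [matmul(h_i, w2_i) for h_i, w2_i in zip(hidden, split_rows(w2, n))]
    # All-reduce: sum the partial outputs across shards (the inter-GPU step).
    rows, cols = len(partials[0]), len(partials[0][0])
    return [[sum(p[r][c] for p in partials) for c in range(cols)] for r in range(rows)]


x = [[1.0, 2.0, 0.0, -1.0], [3.0, -1.0, 2.0, 1.0]]
w1 = [[1.0, 0.0, 2.0, -1.0], [0.0, 1.0, -1.0, 2.0],
      [1.0, 1.0, 0.0, 0.0], [2.0, -2.0, 1.0, 1.0]]
w2 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 2.0]]

reference = matmul(relu(matmul(x, w1)), w2)    # single-GPU result
sharded = tensor_parallel_mlp(x, w1, w2, n=2)  # two simulated GPUs
print(sharded == reference)  # True
```

Splitting the first matrix by columns is what lets the element-wise activation run locally; only the final sum needs communication, once per MLP block.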

The problem is that transferring data between cards adds latency, even over 5th-generation NVLink: every tensor-parallel layer has to synchronize partial results across GPUs. The fewer cards needed to host a model, the faster the inference and the lower the total cost of ownership (TCO). In 2026, the winner won’t be the one with the most FLOPS, but the one that can run a “fat” model on the least silicon.
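A textbook alpha-beta cost model shows why every extra card hurts. A ring all-reduce over n GPUs takes 2(n-1) latency-bound steps and moves 2(n-1)/n of the message per GPU, and Megatron-style tensor parallelism performs two all-reduces per transformer layer. The sketch below applies this to a single decode step using the Llama 3.1 405B shape (126 layers, hidden size 16384) at batch size 1. The per-step latency and link bandwidth are hypothetical values, and real collective libraries switch to other algorithms for small messages, so only the trend across TP degrees is meaningful.

```python
def ring_allreduce_seconds(n_gpus: int, message_bytes: float,
                           alpha_s: float, bw_bytes_per_s: float) -> float:
    """Alpha-beta cost of a ring all-reduce.

    2(n-1) latency-bound steps, and each GPU sends 2(n-1)/n of the message.
    With a single GPU there is nothing to reduce, so the cost is zero.
    """
    steps = 2 * (n_gpus - 1)
    return steps * alpha_s + (steps / n_gpus) * message_bytes / bw_bytes_per_s


def tp_comm_per_token(n_gpus: int, layers: int, hidden: int, batch: int,
                      bytes_per_elem: int, alpha_s: float, bw_bytes_per_s: float) -> float:
    """All-reduce time per decode step: two all-reduces per transformer layer."""
    message = batch * hidden * bytes_per_elem  # one activation vector per sequence
    return 2 * layers * ring_allreduce_seconds(n_gpus, message, alpha_s, bw_bytes_per_s)


ALPHA_S = 5e-6   # hypothetical per-step latency (5 microseconds)
LINK_BW = 900e9  # hypothetical per-direction link bandwidth (bytes/s)

comm = {
    n: tp_comm_per_token(n, layers=126, hidden=16384, batch=1, bytes_per_elem=2,
                         alpha_s=ALPHA_S, bw_bytes_per_s=LINK_BW)
    for n in (1, 2, 4, 8)
}
for n, seconds in comm.items():
    print(f"TP={n}: {seconds * 1e3:.2f} ms of all-reduce per generated token")
```

Under these assumptions the latency term dominates at batch size 1, so going from eight GPUs to four more than halves the communication time; the absolute milliseconds depend entirely on the placeholder constants.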